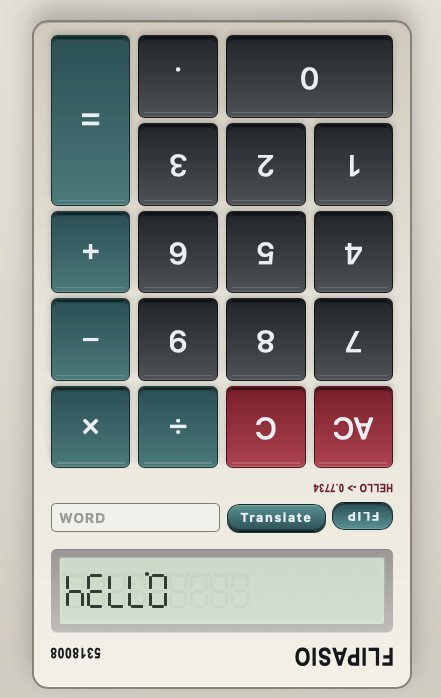

My eldest daughter recently discovered the classic LCD calculator trick: type certain numbers, flip it, and make it spell words. She was thrilled to show me, and I was just as excited watching her find that little “useless” easter egg that entertained me during way too many hours in classes that I should’ve paid more… Continue reading Flipasio

Best of

A curated selection of Daniel Pradilla articles on technology, software, work, and practical problem-solving.

EchoTree

For years, I kept some of my social accounts alive with a Python script. It read from a collection of RSS feeds and shared at random times. It worked, my feeds stayed active, and people asked me what I was doing reading at 3am. The script had a bit of personality: it logged out… Continue reading EchoTree

5 lessons I learned playing with Clawdbot, a local agentic assistant

I’ve spent the last few weeks playing with Clawdbot. My instance is named Clawd. If you haven’t seen this category yet: think “chat assistant”, but with hands. It can run commands, write files, poke your integrations, and generally do the annoying glue-work you normally do by tab-switching and copy/pasting. TL;DR Clawdbot lets you go from… Continue reading 5 lessons I learned playing with Clawdbot, a local agentic assistant

Classifying fruits with a Convolutional Neural Network in Keras

I followed a tutorial on Convolutional Neural Networks that left many questions unanswered. Soon I realized that the actual process of architecting a Neural Network and setting the parameters was much more experimental than I had thought. It took a while to find explanations that a rookie like me could understand. Most of the… Continue reading Classifying fruits with a Convolutional Neural Network in Keras

Readability scoring of the United Nations Corpus

Imagine you could estimate how hard a document would be to read before reading it. Imagine you could do it for entire batches of documents you need to process. Imagine you could have a recommender system that would help you prioritize unread documents according to their difficulty. A bit of experimentation with the public United… Continue reading Readability scoring of the United Nations Corpus